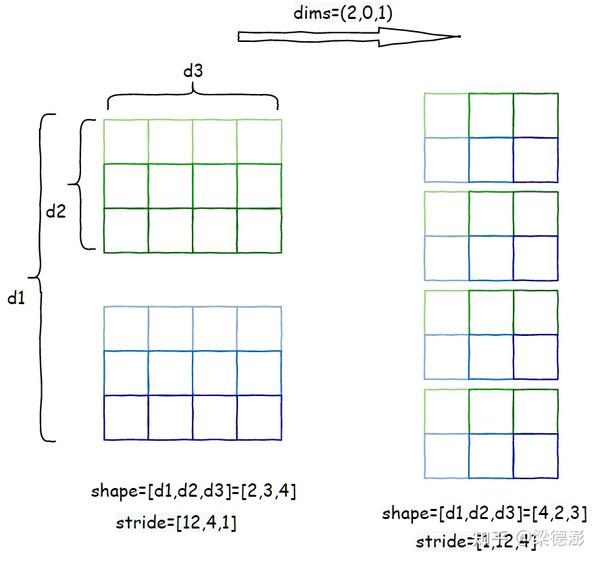

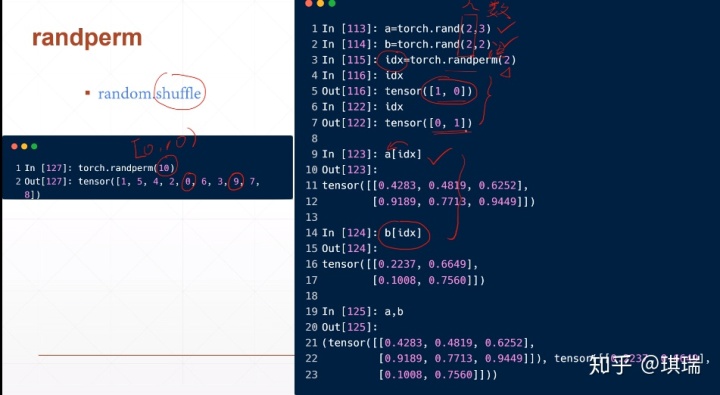

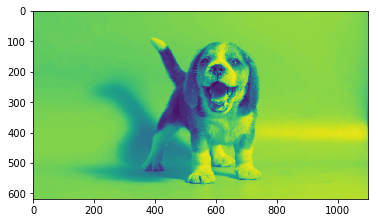

`torch.moveaxis(input, source, destination) → Tensor` is equivalent to NumPy's moveaxis function. torch.moveaxis() is an alias for torch.movedim(). torch.permute() rearranges the original tensor according to the desired ordering and returns a tensor with its dimensions reordered; unlike moveaxis, it expects the complete ordering of all dimensions rather than only the axes to move. These functions can be used for rearranging tensors, for example before reshaping them. When using them, keep in mind the number of axes and their positions, the shape of the tensor, and its data type.

At some point, you will have to convert between raw data (for example, images) and a proper torch::Tensor and back. Some of this is hardly documented, but you can find some information in the class reference documentation of torch::Module.

permute is quite different from view and reshape. For a tensor t of shape C x N x F (channels by rows by features), you can shuffle along the second dimension by indexing that dimension with a permutation from torch.randperm.

I think the way of thinking in PyTorch, unlike in TF/Keras, is that layers are generally used for processes that require gradients. Flatten(), Reshape(), Add(), and so on are just formal operations with no gradients involved, so you can use helper functions like the ones in torch.nn.functional instead. This is also why it is faster to flatten tensors inside forward. Timing a Flatten() module, with `flatten = Flatten()` and `t = torch.Tensor(3, 2, 2).random_(0, 10)`, `%timeit f = flatten(t)` gives 5.16 µs ± 122 ns per loop (mean ± std. dev. of 7 runs, 100000 loops each). This result shows that creating a class would be the slower approach.
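As a minimal sketch of how moveaxis, movedim, and permute relate, and of the shuffle along the second dimension, the snippet below uses a small made-up tensor. The tensor `t`, its shape, and the variable names are assumptions chosen for illustration only.

```python
import torch

# Hypothetical example tensor: 2 channels, 3 rows, 4 features (C x N x F).
t = torch.arange(24).reshape(2, 3, 4)

# moveaxis moves one axis; the remaining axes keep their relative order.
moved = torch.moveaxis(t, 0, -1)      # (C, N, F) -> (N, F, C)

# movedim is the same operation under another name.
same = torch.movedim(t, 0, -1)

# permute needs the full new ordering of all dimensions spelled out.
permuted = t.permute(1, 2, 0)         # also (N, F, C)

# Shuffle along the second dimension (rows) with a random index permutation.
idx = torch.randperm(t.shape[1])
shuffled = t[:, idx]                  # same shape, rows reordered
```

Here `moved`, `same`, and `permuted` hold identical values, which is one way to check that a permute call expresses the axis move you intended.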
The torch.moveaxis() function in PyTorch moves axes to new positions while keeping the other axes in their original order.

For now, one can simply add another line such as `RUN pip install --upgrade torch` at the end of the Dockerfile, so you don't have to wait for a new install every time you launch a containerized job. The real fix is to update the default PyTorch version that comes with the Dockerfile. It would be great to hear from anyone who has run into the same issue.

I suspect this is a bug that has since been fixed in PyTorch and that we simply need to use a newer version. The lazy workaround I'm using is to run `pip install --upgrade torch` inside the container, which gets rid of this error.

I am guessing that you're converting the image from H x W x C format to C x H x W.
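A minimal sketch of the H x W x C to C x H x W conversion mentioned here, assuming the raw image arrives as a NumPy uint8 array. The random image and its size are placeholders, not data from the original post.

```python
import numpy as np
import torch

# Hypothetical raw image: height 4, width 5, 3 color channels (H x W x C).
image = np.random.randint(0, 256, size=(4, 5, 3), dtype=np.uint8)

# from_numpy shares memory with the array; permute only reorders the axes.
chw = torch.from_numpy(image).permute(2, 0, 1)   # (H, W, C) -> (C, H, W)

# Going back applies the inverse ordering before returning to NumPy.
restored = chw.permute(1, 2, 0).numpy()          # (C, H, W) -> (H, W, C)
```

The inverse of `permute(2, 0, 1)` is `permute(1, 2, 0)`, so the round trip returns the original pixel layout.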

I used the vanilla Dockerfile from Preview 4, whose image I converted to a Singularity SIF file on the cluster. I used this Singularity image to run HumanoidAMP training with torch_deterministic=True and got this error message: `File "./IsaacGymEnvs/isaacgymenvs/tasks/humanoid_amp.py", line 290, in _set_env_state` followed by `RuntimeError: number of dims don't match in permute`. With torch_deterministic=False, I don't get this error at all and everything runs as expected. For reference, the node I was allocated uses a Tesla P100.

This is the issue: the mask is 2-dimensional, but you've provided 3 arguments to mask.permute().

When I search for the permute function (torch.permute), I can only find the method. The irony is that the method's documentation tries to redirect to the function for more information, but (because it does not exist) it cannot link to it.

One snippet, which imports `Optional` from typing, `numpy as np`, and `torch`, converts a 3-dimensional (H, W, C) input to (C, H, W) with `tensor = tensor.permute(2, 0, 1)` and has a separate branch, `elif len(inputshape) == 4`, for batched (B, H, W, ...) input. Inside ssd.py, at the line starting `loc.append(l(x).permute(0, 2, 3, 1)`, there is a call to contiguous() after permute().
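The two permute points above can be sketched as follows. The mask and feature-map shapes are made up for illustration, and the exact wording of the error message differs between PyTorch versions, so the sketch only relies on a RuntimeError being raised.

```python
import torch

# Hypothetical 2-D mask (H x W): permute must receive exactly two indices.
mask = torch.zeros(4, 5)
try:
    mask.permute(2, 0, 1)          # three indices for a 2-D tensor
    failed = False
except RuntimeError:
    failed = True                  # dimension count does not match

transposed = mask.permute(1, 0)    # valid: one index per dimension

# Hypothetical (B, C, H, W) feature map, permuted to (B, H, W, C) as in the
# ssd.py line quoted above.
x = torch.arange(24).reshape(1, 2, 3, 4)
y = x.permute(0, 2, 3, 1)          # a non-contiguous view of x

# contiguous() copies the data into the new layout so view() can flatten it.
flat = y.contiguous().view(y.size(0), -1)
```

Calling `view` directly on `y` would fail here because the permuted strides cannot be flattened without a copy, which is a plausible reason for the contiguous() call after permute().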

It seems that the default PyTorch version installed with the pre-packaged Dockerfile doesn't like tensor entry assignments.

I am trying to follow the PyTorch code for the SSD implementation.
